As AI systems become more sophisticated and pervasive, they need governance frameworks that keep pace. AI Governance Contextual Validation is the process of checking that an AI application is not only technically sound but also ethically responsible, transparent, and aligned with societal values in the setting where it is actually used. This blog post covers what AI governance is, why contextual validation matters, and the steps involved in implementing it.

Understanding AI Governance

AI governance refers to the policies, procedures, and frameworks that guide the development, deployment, and use of AI technologies. It encompasses a wide range of considerations, including ethical standards, legal compliance, and technical robustness. Effective AI governance is essential for building trust among stakeholders, mitigating risks, and ensuring that AI systems operate in the best interests of society.

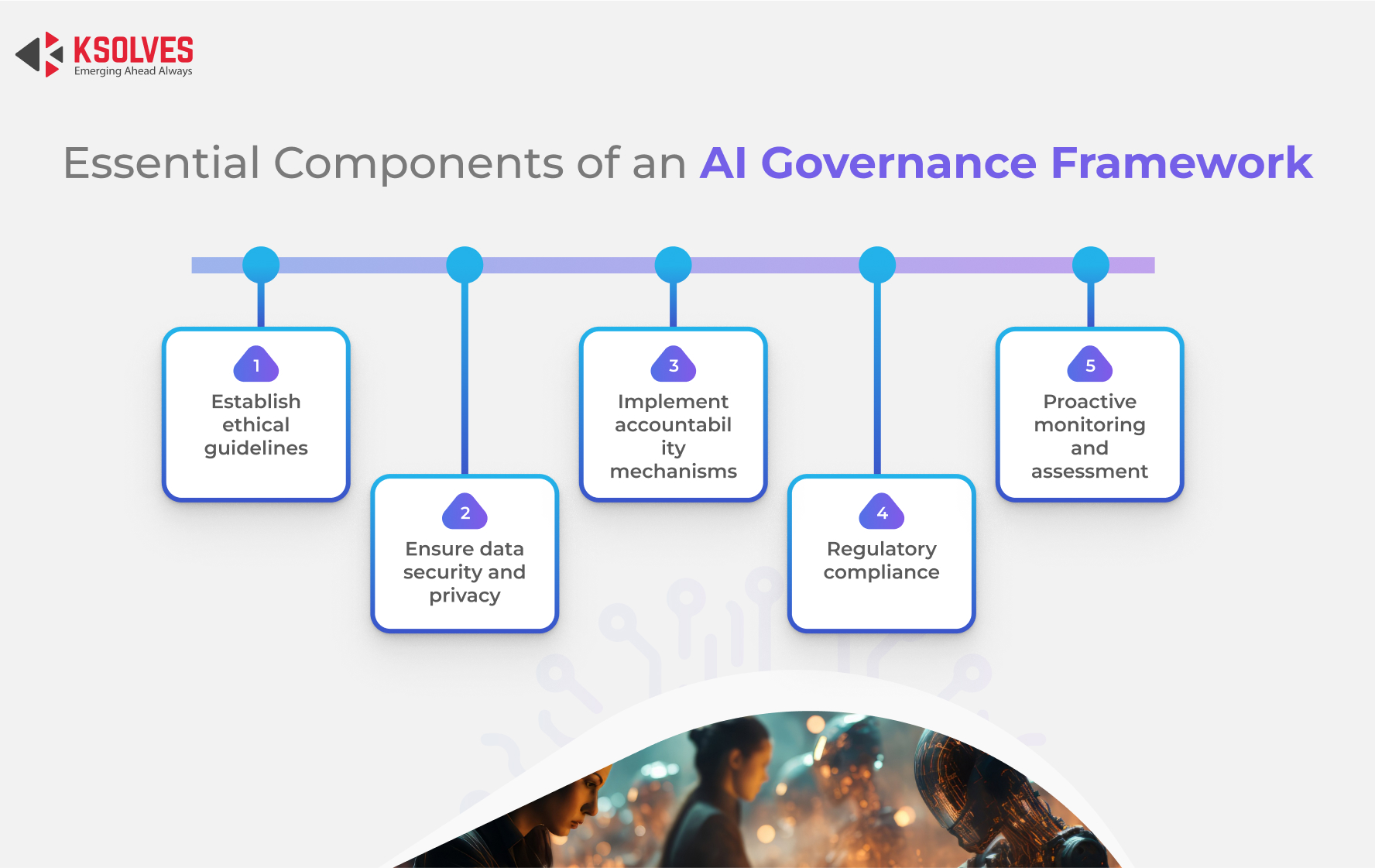

Key components of AI governance include:

- Ethical guidelines: Ensuring that AI systems are designed and used in a manner that respects human rights and ethical principles.

- Legal compliance: Adhering to relevant laws and regulations, such as data protection laws and industry-specific standards.

- Transparency and accountability: Making AI systems transparent and accountable, so that their decisions can be understood and challenged if necessary.

- Risk management: Identifying and mitigating potential risks associated with AI, such as bias, discrimination, and security vulnerabilities.

The Role of Contextual Validation in AI Governance

Contextual validation is a crucial aspect of AI governance that ensures AI systems are evaluated within the specific contexts in which they will be deployed. This involves assessing the cultural, social, and environmental factors that may influence the performance and impact of AI technologies. By validating AI systems in context, organizations can better understand how these systems will interact with real-world scenarios and address any potential issues proactively.

Contextual validation helps in several ways:

- Identifying cultural biases: Ensuring that AI systems do not perpetuate or amplify existing biases and discrimination.

- Adapting to local regulations: Tailoring AI solutions to comply with local laws and regulations, which may vary significantly across different regions.

- Enhancing user trust: Building trust among users by demonstrating that AI systems are designed with their specific needs and concerns in mind.

- Improving system performance: Ensuring that AI systems perform optimally in the intended environment, taking into account factors such as language, customs, and infrastructure.
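One simple way to make the points above measurable is to compare a quality metric across deployment contexts. The sketch below is illustrative only: the context names, the records, and the 0.10 gap threshold are assumptions, and accuracy stands in for whichever metric an organization actually tracks.

```python
from collections import defaultdict


def accuracy_by_context(records):
    """Return {context: accuracy} for (context, prediction, label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for context, prediction, label in records:
        total[context] += 1
        if prediction == label:
            correct[context] += 1
    return {ctx: correct[ctx] / total[ctx] for ctx in total}


def contexts_trailing_best(accuracies, max_gap=0.10):
    """List contexts whose accuracy trails the best context by more than max_gap."""
    best = max(accuracies.values())
    return sorted(ctx for ctx, acc in accuracies.items() if best - acc > max_gap)


# Hypothetical evaluation records: (deployment context, prediction, true label)
records = [
    ("region_a", 1, 1), ("region_a", 0, 0), ("region_a", 1, 1), ("region_a", 0, 0),
    ("region_b", 1, 0), ("region_b", 0, 0), ("region_b", 1, 1), ("region_b", 0, 1),
]

accuracies = accuracy_by_context(records)
flagged = contexts_trailing_best(accuracies)
print(accuracies, flagged)
```

A flagged context is a prompt for investigation rather than proof of bias: the gap may come from language, data quality, or infrastructure differences in that setting.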

Steps for Implementing AI Governance Contextual Validation

Implementing AI Governance Contextual Validation involves a systematic approach that includes several key steps. These steps ensure that AI systems are thoroughly evaluated and validated within their intended contexts, thereby enhancing their reliability and acceptability.

1. Define Clear Objectives and Scope

The first step in implementing AI governance contextual validation is to define clear objectives and scope. This involves identifying the specific AI applications to be validated, the contexts in which they will be deployed, and the key performance indicators (KPIs) that will be used to measure success. Clear objectives and scope provide a roadmap for the validation process and ensure that all stakeholders are aligned.
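Objectives and scope are easier to align on when they are written down in a structured form. The following is a minimal sketch, assuming a scope consists of an application name, a list of deployment contexts, and a list of KPIs with thresholds. The class names, field names, and example values are hypothetical and not part of any standard.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Kpi:
    name: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        """Check an observed value against the threshold in the right direction."""
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass
class ValidationScope:
    application: str
    contexts: List[str] = field(default_factory=list)
    kpis: List[Kpi] = field(default_factory=list)

    def missing_parts(self) -> List[str]:
        """Name the parts of the scope that are still undefined."""
        missing = []
        if not self.application:
            missing.append("application")
        if not self.contexts:
            missing.append("contexts")
        if not self.kpis:
            missing.append("kpis")
        return missing


# Hypothetical scope for illustration only
scope = ValidationScope(
    application="triage-assistant",
    contexts=["urban clinic", "rural clinic"],
    kpis=[Kpi("accuracy", 0.90), Kpi("false_positive_rate", 0.05, higher_is_better=False)],
)
print(scope.missing_parts())
```

An empty result from `missing_parts()` only shows that the scope is complete on paper; whether the chosen KPIs are the right ones still needs stakeholder review.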

2. Conduct a Thorough Risk Assessment

Before deploying AI systems, it is essential to conduct a thorough risk assessment. This involves identifying potential risks and vulnerabilities associated with the AI application, such as data privacy concerns, security threats, and ethical issues. A comprehensive risk assessment helps in developing mitigation strategies and ensuring that the AI system is robust and secure.
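A risk assessment is often recorded as a risk register. The sketch below assumes a common likelihood-times-impact scoring on 1 to 5 scales; the scales, the threshold of 12, and the example risks are assumptions chosen for illustration, not values prescribed by this post.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks, threshold=12):
    """Return risks scoring at or above threshold, highest score first."""
    selected = [r for r in risks if r.score >= threshold]
    return sorted(selected, key=lambda r: r.score, reverse=True)


# Hypothetical register entries
register = [
    Risk("data privacy breach", likelihood=3, impact=5),  # score 15
    Risk("biased outcomes", likelihood=4, impact=4),      # score 16
    Risk("model downtime", likelihood=2, impact=2),       # score 4
]

high_priority = prioritize(register)
for risk in high_priority:
    print(risk.name, risk.score)
```

The ordered list gives a starting point for deciding which mitigation strategies to develop first.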

3. Develop Context-Specific Guidelines

Based on the risk assessment, develop context-specific guidelines that outline the ethical, legal, and technical requirements for the AI system. These guidelines should be tailored to the specific context in which the AI will be deployed, taking into account cultural, social, and environmental factors. Context-specific guidelines ensure that the AI system is aligned with local values and regulations.

4. Implement Transparent and Accountable Processes

Transparency and accountability are fundamental to effective AI governance. Implement processes that ensure the AI system's decisions are transparent and can be audited. This includes documenting the decision-making processes, providing clear explanations for AI-generated outcomes, and establishing mechanisms for accountability. Transparent and accountable processes build trust among stakeholders and enhance the credibility of the AI system.
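Documenting decisions so they can be audited can be as simple as writing one structured record per decision. The sketch below is one possible shape, assuming a JSON-lines log and a hash of the inputs in place of the raw data; the field names, the model version string, and the example decision are hypothetical.

```python
import hashlib
import io
import json
from datetime import datetime, timezone


def audit_record(model_version, inputs, output, explanation):
    """Build an audit entry; inputs are hashed so the log holds no raw personal data."""
    payload = json.dumps(inputs, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(payload).hexdigest(),
        "output": output,
        "explanation": explanation,
    }


def append_record(stream, record):
    """Write one record per line (JSON Lines) to any text stream."""
    stream.write(json.dumps(record) + "\n")


# An in-memory stream stands in for a real log file in this sketch
log = io.StringIO()
record = audit_record(
    model_version="credit-model-0.1",
    inputs={"income": 52000, "tenure_months": 18},
    output="refer_to_human",
    explanation="income below the assumed auto-approve band",
)
append_record(log, record)
print(log.getvalue())
```

Hashing the inputs lets an auditor confirm which data a decision was based on without storing that data in the log itself. Whether that trade-off is acceptable depends on the applicable regulations.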

5. Conduct Pilot Testing and Validation

Before full-scale deployment, conduct pilot testing and validation in the intended context. This involves deploying the AI system in a controlled environment and monitoring its performance against the defined KPIs. Pilot testing helps in identifying any issues or gaps in the AI system and allows for necessary adjustments before full deployment. It also provides valuable insights into the system's real-world performance and user acceptance.
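Monitoring a pilot against the defined KPIs comes down to comparing observed values with targets. The following is a rough sketch in which the KPI names, thresholds, and observed values are made up for illustration; a KPI that was never measured is treated as a failure so that gaps in the pilot are visible.

```python
def evaluate_pilot(observed, targets):
    """Compare observed metrics with targets.

    targets maps a KPI name to (threshold, direction), where direction "min"
    means the observed value must be at least the threshold and "max" means
    it must be at most the threshold. Returns {kpi_name: reason} for failures.
    """
    failures = {}
    for name, (threshold, direction) in targets.items():
        value = observed.get(name)
        if value is None:
            failures[name] = "not measured"
        elif direction == "min" and value < threshold:
            failures[name] = f"{value} is below {threshold}"
        elif direction == "max" and value > threshold:
            failures[name] = f"{value} is above {threshold}"
    return failures


# Hypothetical targets and pilot results
targets = {
    "accuracy": (0.90, "min"),
    "false_positive_rate": (0.05, "max"),
    "user_satisfaction": (4.0, "min"),
}
observed = {"accuracy": 0.93, "false_positive_rate": 0.08}

failures = evaluate_pilot(observed, targets)
ready_for_rollout = not failures
print(failures, ready_for_rollout)
```

In this example the pilot would not proceed to full deployment until the false positive rate is reduced and user satisfaction has been measured.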

6. Gather and Analyze Feedback

During the pilot testing phase, gather feedback from users and stakeholders. This feedback is crucial for understanding the system's strengths and weaknesses and for making necessary improvements. Analyze the feedback to identify patterns, trends, and areas for enhancement. Use this information to refine the AI system and ensure it meets the needs and expectations of its users.

7. Continuous Monitoring and Evaluation

AI governance is an ongoing process that requires continuous monitoring and evaluation. Even after full deployment, it is essential to monitor the AI system's performance and impact. Regularly evaluate the system against the defined KPIs and make necessary adjustments to ensure it remains effective and aligned with evolving contexts and requirements. Continuous monitoring and evaluation help in maintaining the system's reliability and acceptability over time.

🔍 Note: Continuous monitoring and evaluation should involve both technical and non-technical stakeholders to ensure a comprehensive assessment of the AI system's performance and impact.
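On the technical side, continuous monitoring can start with a rolling check of one KPI against its baseline. The sketch below is a simplification under stated assumptions: the baseline, tolerance, window size, and readings are invented, and a rolling mean is only one of many ways to detect degradation.

```python
from collections import deque


class KpiMonitor:
    """Flags when the rolling mean of a KPI falls too far below its baseline."""

    def __init__(self, baseline, tolerance, window=5):
        self.baseline = baseline
        self.tolerance = tolerance
        self.values = deque(maxlen=window)

    def observe(self, value):
        """Record a reading; return True once a full window has degraded beyond tolerance."""
        self.values.append(value)
        if len(self.values) < self.values.maxlen:
            return False  # not enough readings yet to judge
        rolling_mean = sum(self.values) / len(self.values)
        return self.baseline - rolling_mean > self.tolerance


# Hypothetical weekly accuracy readings after deployment
monitor = KpiMonitor(baseline=0.92, tolerance=0.03, window=3)
alerts = [monitor.observe(v) for v in [0.92, 0.91, 0.90, 0.87, 0.85]]
print(alerts)
```

An alert from a check like this is a trigger for the broader review described above, in which non-technical stakeholders help judge whether the context has changed or the system needs adjusting.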

Challenges in AI Governance Contextual Validation

While AI Governance Contextual Validation is essential for ensuring the responsible and effective use of AI, it also presents several challenges. Some of the key challenges include:

- Complexity and diversity of contexts: AI systems may be deployed in a wide range of contexts, each with its unique cultural, social, and environmental factors. Validating AI systems in such diverse contexts can be complex and resource-intensive.

- Rapid technological advancements: AI technologies are evolving rapidly, making it challenging to keep governance frameworks up to date. Governance practices need to be reviewed and revised continuously to keep pace.

- Balancing innovation and regulation: Striking a balance between fostering innovation and ensuring regulatory compliance can be challenging. Overly stringent regulations may stifle innovation, while lax regulations may lead to unethical or harmful AI applications.

- Data privacy and security concerns: AI systems often rely on large amounts of data, raising concerns about data privacy and security. Ensuring that data is handled responsibly and securely is a critical aspect of AI governance.

Best Practices for Effective AI Governance

To overcome these challenges, organizations can adopt several best practices that support robust AI governance and the validation of AI systems within their intended contexts:

- Establishing a cross-functional governance team: Create a team comprising experts from various disciplines, including ethics, law, technology, and user experience. This team should be responsible for developing and implementing AI governance frameworks.

- Adopting a risk-based approach: Prioritize governance efforts based on the level of risk associated with different AI applications. Focus on high-risk areas to ensure that critical issues are addressed promptly.

- Fostering a culture of ethical responsibility: Promote a culture within the organization that values ethical responsibility and transparency. Encourage employees to consider the ethical implications of their work and to act in the best interests of society.

- Engaging with stakeholders: Involve stakeholders, including users, regulators, and community members, in the AI governance process. Their input is valuable for understanding the system's impact and ensuring it meets their needs and expectations.

- Continuous learning and improvement: Stay updated with the latest developments in AI governance and continuously improve governance practices. Encourage a culture of learning and adaptation to keep pace with technological advancements and evolving contexts.

Case Studies in AI Governance Contextual Validation

The two cases below, one from healthcare and one from financial services, show how AI governance principles and contextual validation are applied in practice and what benefits they bring.

Case Study 1: Healthcare AI Systems

In the healthcare sector, AI systems are used for various applications, including disease diagnosis, treatment recommendations, and patient monitoring. Ensuring the ethical and responsible use of AI in healthcare is crucial for patient safety and trust. A leading healthcare provider implemented AI governance contextual validation by:

- Conducting a thorough risk assessment to identify potential ethical and legal issues.

- Developing context-specific guidelines tailored to the healthcare environment, including data privacy and security requirements.

- Implementing transparent and accountable processes, such as documenting decision-making and providing clear explanations for AI-generated outcomes.

- Conducting pilot testing and validation in real-world healthcare settings to gather feedback and make necessary adjustments.

The healthcare provider's AI governance framework ensured that AI systems were validated within the specific context of healthcare, enhancing patient trust and safety.

Case Study 2: Financial Services AI Applications

In the financial services industry, AI is used for fraud detection, credit scoring, and personalized financial advice. Ensuring the ethical and responsible use of AI in finance is essential for maintaining customer trust and regulatory compliance. A major financial institution implemented AI governance contextual validation by:

- Establishing a cross-functional governance team comprising experts from ethics, law, technology, and finance.

- Adopting a risk-based approach to prioritize governance efforts based on the level of risk associated with different AI applications.

- Fostering a culture of ethical responsibility and transparency within the organization.

- Engaging with stakeholders, including customers and regulators, to gather feedback and ensure the AI system meets their needs and expectations.

The financial institution's AI governance framework ensured that AI applications were validated within the specific context of finance, enhancing customer trust and regulatory compliance.

Future Directions in AI Governance

As AI technologies continue to evolve, AI governance will need to adapt with them. Future directions in AI governance may include:

- Developing standardized frameworks and guidelines for AI governance that can be applied across different industries and contexts.

- Enhancing collaboration and knowledge sharing among organizations, regulators, and stakeholders to promote best practices in AI governance.

- Leveraging advanced technologies, such as blockchain and federated learning, to enhance transparency, security, and accountability in AI systems.

- Promoting ethical AI research and development to ensure that AI technologies are designed and used in a manner that respects human rights and societal values.

By embracing these future directions, organizations can ensure that AI governance remains effective and relevant in the rapidly evolving landscape of AI technologies.

AI governance contextual validation is a critical aspect of ensuring the responsible and effective use of AI. By validating AI systems within their intended contexts, organizations can enhance their reliability, acceptability, and alignment with societal values. Implementing effective AI governance strategies involves a systematic approach that includes defining clear objectives, conducting risk assessments, developing context-specific guidelines, and continuously monitoring and evaluating AI systems. By adopting best practices and learning from successful case studies, organizations can overcome the challenges of AI governance and ensure that AI technologies are used for the benefit of society.

As AI continues to transform various industries and aspects of life, the importance of AI Governance Contextual Validation will only grow. Organizations that prioritize AI governance and contextual validation will be better positioned to harness the power of AI responsibly and ethically, building trust among stakeholders and driving innovation for the greater good.
